Deep neural networks are frequently used for pattern-recognition tasks in which no quantitative, human-comprehensible description of the data-generating process is available. In doing so, they typically learn an abstract (entangled and unintelligible) representation of that process. Because physicists usually expect an analysis to yield quantifiable information about the system under study, this may be one reason why neural networks are not used extensively for signal processing in physics experiments.

Deep neural networks (DNNs) have been applied successfully to many tasks, including regression, classification (for example, in image or speech recognition [1, 2]), and time-series analysis. In many applications they are known for building useful higher-level features from lower-level ones. These feature representations, however, often remain incomprehensible to humans.

This characteristic is one of the reasons DNNs are not more widely used in physics, where data are typically explored in a very different way.

Most systems studied in physics are well described by physical models, often referred to as equations of motion. Experimental data are analyzed with respect to a specific model. Solving the equations of motion, analytically or numerically, yields a theoretical description of the data-generating process. The resulting model typically contains a set of mathematical variables that can be adjusted to span the data. The true values of these variables are usually unknown and must be determined, which is why we refer to them as latent parameters. The true latent parameters are approximated by comparing the model with the data, usually by fitting the model to the data.
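To make the fitting step concrete, here is a minimal sketch of estimating latent parameters by least squares. The model (an undamped cosine), the parameter values, the noise level, and the function names `model` and `fit_oscillation` are all assumptions chosen for illustration, not taken from any particular experiment. For each trial frequency the model is linear in the remaining two coefficients, so those are solved exactly and the frequency with the smallest residual is kept.

```python
import numpy as np


def model(t, amplitude, frequency, phase):
    """Hypothetical physical model: an undamped oscillation."""
    return amplitude * np.cos(2 * np.pi * frequency * t + phase)


def fit_oscillation(t, y, candidate_frequencies):
    """Estimate (amplitude, frequency, phase) by least squares.

    A*cos(w*t + phi) = a*cos(w*t) + b*sin(w*t) with a = A*cos(phi) and
    b = -A*sin(phi), so for a fixed frequency the fit is a linear problem.
    """
    best = None
    for f in candidate_frequencies:
        design = np.column_stack(
            [np.cos(2 * np.pi * f * t), np.sin(2 * np.pi * f * t)]
        )
        coeffs, *_ = np.linalg.lstsq(design, y, rcond=None)
        residual = np.sum((design @ coeffs - y) ** 2)
        if best is None or residual < best[0]:
            best = (residual, f, coeffs)
    _, f, (a, b) = best
    return float(np.hypot(a, b)), float(f), float(np.arctan2(-b, a))


# Simulated "measurement": assumed true latent parameters plus Gaussian noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 500)
true_params = (1.5, 7.0, 0.3)  # amplitude, frequency (Hz), phase (rad)
data = model(t, *true_params) + 0.1 * rng.normal(size=t.size)

estimated = fit_oscillation(t, data, np.linspace(1.0, 15.0, 1401))
print("true:     ", true_params)
print("estimated:", estimated)
```

The estimates only approximate the true values, because the data are noisy and the frequency is searched on a finite grid. A gradient-based nonlinear least-squares routine would be the more usual choice for models that cannot be partly linearized like this one.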

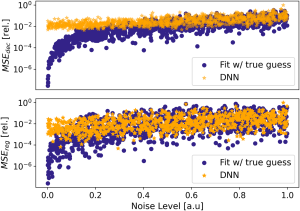

DNNs were recently trained on synthetic nuclear magnetic resonance (NMR) spectroscopic data, simulated by accurate physical models. The large amount of labeled data generated this way enables convergence of the DNN, which is then used to process real NMR data with great accuracy. A similar approach, that is, starting training with synthetic data and continuing with real-world data, has become popular in robotics and autonomous driving.
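As a rough illustration of how a physical model can supply labeled training data, the sketch below simulates noisy signals and stores the latent parameters used for each one as its label. The signal model (an exponentially damped cosine), the parameter ranges, the noise level, and the names `simulate_signal` and `make_synthetic_dataset` are assumptions made for this example; an accurate NMR simulation would be considerably more detailed.

```python
import numpy as np


def simulate_signal(t, amplitude, frequency, decay_time, phase):
    """Assumed toy model: an exponentially damped oscillation."""
    return (
        amplitude
        * np.exp(-t / decay_time)
        * np.cos(2 * np.pi * frequency * t + phase)
    )


def make_synthetic_dataset(n_samples, n_points=256, duration=1.0,
                           noise_std=0.05, seed=0):
    """Return (signals, labels); each label row holds the latent parameters."""
    rng = np.random.default_rng(seed)
    t = np.linspace(0.0, duration, n_points)

    # Latent parameters drawn uniformly from assumed ranges.
    amplitude = rng.uniform(0.5, 2.0, n_samples)
    frequency = rng.uniform(5.0, 50.0, n_samples)
    decay_time = rng.uniform(0.1, 1.0, n_samples)
    phase = rng.uniform(-np.pi, np.pi, n_samples)
    labels = np.column_stack([amplitude, frequency, decay_time, phase])

    # Broadcasting (n_samples, 1) against (1, n_points) simulates all signals at once.
    clean = simulate_signal(
        t[None, :],
        amplitude[:, None],
        frequency[:, None],
        decay_time[:, None],
        phase[:, None],
    )
    signals = clean + rng.normal(0.0, noise_std, clean.shape)
    return signals.astype(np.float32), labels.astype(np.float32)


signals, labels = make_synthetic_dataset(n_samples=1000)
print(signals.shape, labels.shape)  # (1000, 256) (1000, 4)
```

Because the generator is cheap to call, the data set can be made as large as training requires, and the labels are exact by construction.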

Although often very successful, most DNN applications in physics that we are aware of address classification problems. Moreover, DNNs are still rarely used for time-series analysis, even though time series are the most common type of data produced in physics experiments.

Because training is done on arbitrarily large volumes of synthetic data, raw performance could be improved by increasing the number of trainable parameters, for example by adding more layers or neurons, without much concern for overfitting. The architecture itself could be augmented with an upstream classifier DNN module that identifies the type of signal being analyzed. Classified signals could then be processed by specialized versions of our architecture, each trained on the corresponding type of signal.
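The routing idea could be organized roughly as follows. This is only a structural sketch: `classify_signal` is a hand-written stand-in for the upstream classifier DNN, the two "specialists" are placeholders for separately trained networks, and the signal types "damped" and "steady" are hypothetical labels invented for the example.

```python
from typing import Callable, Dict, Tuple

import numpy as np

Signal = np.ndarray
Specialist = Callable[[Signal], Dict[str, object]]


def classify_signal(signal: Signal) -> str:
    """Stand-in for the upstream classifier (hypothetical rule, not a DNN).

    A signal whose peak amplitude in the last quarter is less than half of
    its peak in the first quarter is labeled "damped", otherwise "steady".
    """
    quarter = len(signal) // 4
    early = np.max(np.abs(signal[:quarter]))
    late = np.max(np.abs(signal[-quarter:]))
    return "damped" if late < 0.5 * early else "steady"


def make_placeholder_specialist(name: str) -> Specialist:
    """Placeholder for a specialized model trained on one signal type."""
    def specialist(signal: Signal) -> Dict[str, object]:
        return {"specialist": name, "rms": float(np.sqrt(np.mean(signal ** 2)))}
    return specialist


def route(signal: Signal,
          classifier: Callable[[Signal], str],
          specialists: Dict[str, Specialist]) -> Tuple[str, Dict[str, object]]:
    """Classify the signal, then hand it to the matching specialist."""
    label = classifier(signal)
    if label not in specialists:
        raise KeyError(f"no specialist registered for signal type {label!r}")
    return label, specialists[label](signal)


specialists = {
    "damped": make_placeholder_specialist("damped-model"),
    "steady": make_placeholder_specialist("steady-model"),
}

t = np.linspace(0.0, 1.0, 400)
damped_signal = np.exp(-5.0 * t) * np.cos(2 * np.pi * 10.0 * t)
steady_signal = np.cos(2 * np.pi * 10.0 * t)

damped_result = route(damped_signal, classify_signal, specialists)
steady_result = route(steady_signal, classify_signal, specialists)
print(damped_result)
print(steady_result)
```

Keeping the classifier and the specialists behind plain callables means each piece could later be replaced by a trained network without changing the routing logic.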

Reference links:

- https://www.xenonstack.com/glossary/deep-neural-networks

- https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0268439

JAYA SAI SRIKAR

21VV1A1206

Information Technology